Hexagonal Vibes

Teaching a new dog (pack) old tricks! A case study in using old architecture tricks to keep new AI tools from eating their own tail.

I keep bees. I like it; bees are amazing to observe, and you get a sweet reward after. One thing people get wrong about bees: they are not captive. They can leave anytime they want. They mostly choose to stay. The safety of their wooden box and my care are a better fit than full freedom, but let's not get philosophical.

The other thing people get wrong is the shape inside the frames: the beautiful hexagons (hexagon is the bestagon). They're not the beekeeper's idea, but not exactly the bees' idea either. Wild hives look different from human-maintained ones. The hexagonal comb in our frames sits somewhere between a seed structure (wax that we remelt and press into a hex foundation) and the bees' own design: they build on top of the foundation, within its constraint. Think of it as a template. And bees like it. This is why they'll sometimes populate an empty hive all by themselves (yay!), and why they build faster and straighter on it than they would on their own.

I just lived through the software version of it.

Three Projects, One Emerging Pattern

I picked up Claude Code a month ago. I wanted to check the vibes for myself.

My first project was a single HTML file, something I’ve done a bunch of times using the Claude app. An interactive interview prep toolkit for a junior Scrum Master I’m coaching. Clear need: job interview. It included a few tabs, some checklists, the STAR story builder, a practice timer, but also inline CSS and JavaScript, localStorage for persistence, and an option for her to save her answers and send them to me in a single text file. Claude one-shot it. One session, pure vibes, and it worked! No architecture needed. One file, single purpose, done.

Second project was similar, a simple HTML dashboard for me to review my latest files and sort through them, with a small Python script working in the background to rename and organize. Same deal. One session, pure vibes, worked. This personal-software-on-demand future seems bright.

Third project was a game.

Tattered Banner Tales, a browser-based D&D-inspired tactical turn-based combat game. FastAPI and Python on the backend, React and TypeScript on the frontend, WebSocket for real-time multiplayer, SQLite, Docker Compose, all deployed on a Hetzner VPS that costs me €4.51 a month.

Sessions 1–2: Pure Vibes

The v1.0 MVP shipped in two intensive Claude sessions, maybe sixteen hours total. Four hero classes, eight monster types (I drew the icons myself), seventeen spells, three battle maps, spectator mode, and a replay system with deterministic seeded RNG. I was proud to put a state machine in there from the start, he he. And forty passing tests. Cold start to working game, in the background of my day job.

The vibes were incredible. Dopamine satiated and excited!

I logged it simply: “Pure build mode, no architecture thinking. Proved the concept works.” I got hooked!

Session 4: “I Know This One!”

The game kept growing. By the fourth session I was adding a multi-section website around the combat engine: contact page, tales section (lore), navigation. And I looked at what was happening and thought: I know this one! I’ve seen it before!

I didn’t come to this by accident. I’ve been lucky to call Alistair Cockburn a friend for the past seven years or so. Alistair formalized Hexagonal Architecture, often called Ports and Adapters, in 2005: the pattern that separates what your application does from how it talks to the world. Now, he wouldn’t call me his mentee. He doesn’t want followers. His philosophy, which I share, is closer to two hermits sharing a fire and exchanging stories. No teachers, no gurus, no masters. But seven years in this man’s aura, studying his work, left a mark! Crystal Clear. Hexagonal Architecture. Heart of Agile. These are the patterns I live in and try to teach.

The evidence was sitting in a single file: session_manager.py, 842 lines. It was the God Object. Game state management, WebSocket connections, SQL queries scattered throughout, broadcast logic, event persistence, everything tangled together. Every time I asked Claude to add a feature, it would reach into that file and wire new logic directly into the (beautiful) mess. It worked, technically. But each session, the context window filled with more noise.

My iteration log for session 4 reads: “Multi-section site + ports & adapters pattern established. This was the ‘we’re not just hacking this’ moment. Every feature after this follows the port → adapter → container → router pattern.”

Quick explainer: In Hexagonal Architecture, your application’s core logic lives inside a “hexagon.” It doesn’t know about databases, web frameworks, or APIs. It talks to the outside world through Ports (interfaces that define what the app needs) and Adapters (implementations that provide how). Want to swap SQLite for Postgres? Change the adapter, not the core. Want to test without a database? Use an InMemory adapter. The boundaries are the whole point. Cockburn’s original article is the definitive source.
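To make the explainer concrete, here is a minimal Python sketch. The `ContactRepository` port and its two methods are hypothetical (the real TBT signatures aren't shown in this post). The core function `submit_contact` depends only on the port, so the standard-library `sqlite3` adapter and the InMemory adapter are interchangeable.

```python
from __future__ import annotations

import sqlite3
from typing import Protocol


class ContactRepository(Protocol):
    """Port: what the core needs from storage (hypothetical methods)."""

    def save_message(self, email: str, body: str) -> int: ...
    def count_messages(self) -> int: ...


class InMemoryContactRepository:
    """Test adapter: keeps messages in a list, no database required."""

    def __init__(self) -> None:
        self._messages: list[tuple[str, str]] = []

    def save_message(self, email: str, body: str) -> int:
        self._messages.append((email, body))
        return len(self._messages)  # 1-based id, like SQLite's rowid

    def count_messages(self) -> int:
        return len(self._messages)


class SqliteContactRepository:
    """Production-style adapter backed by the standard-library sqlite3 module."""

    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            "id INTEGER PRIMARY KEY, email TEXT NOT NULL, body TEXT NOT NULL)"
        )

    def save_message(self, email: str, body: str) -> int:
        cur = self._conn.execute(
            "INSERT INTO messages (email, body) VALUES (?, ?)", (email, body)
        )
        self._conn.commit()
        return cur.lastrowid

    def count_messages(self) -> int:
        (n,) = self._conn.execute("SELECT COUNT(*) FROM messages").fetchone()
        return n


def submit_contact(repo: ContactRepository, email: str, body: str) -> int:
    """Core logic: validates input, then talks to storage only through the port."""
    if "@" not in email or not body.strip():
        raise ValueError("invalid contact message")
    return repo.save_message(email, body.strip())
```

Swapping storage means constructing a different adapter where the app is wired up; `submit_contact` itself never changes.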

Now, this wasn’t an experiment I planned. My pattern recognition kicked in. This is exactly what hexagonal architecture solves! I’ve seen it in companies I worked with (it’s really popular with some senior engineers in Serbia :D) and I could see a place for it now.

The Refactor

Between v0.1.1 and v0.4.0, I extracted the monolith into hexagonal boundaries. The 842-line God Object got decomposed:

Step 1 pulled out two driven ports: GameRepository (10 methods) and PlayerNotifier (4 methods). Each got a real adapter (SQLite, WebSocket) and a test adapter (InMemory). SessionManager went from calling get_db() directly to receiving its dependencies through constructor injection. Before: untestable without a running database and WebSocket server. After: full session lifecycle testable in under 100 milliseconds.
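A minimal sketch of that constructor-injection shape. The method names (`save_session`, `load_session`, `broadcast`, `start`, `end_turn`) are hypothetical stand-ins for the real 10-method and 4-method ports; only the wiring pattern is the point.

```python
from __future__ import annotations

from typing import Protocol


class GameRepository(Protocol):
    """Driven port for persistence (two hypothetical methods)."""

    def save_session(self, session_id: str, state: dict) -> None: ...
    def load_session(self, session_id: str) -> dict | None: ...


class PlayerNotifier(Protocol):
    """Driven port for pushing updates to connected players."""

    def broadcast(self, session_id: str, message: dict) -> None: ...


class InMemoryGameRepository:
    def __init__(self) -> None:
        self.sessions: dict[str, dict] = {}

    def save_session(self, session_id: str, state: dict) -> None:
        self.sessions[session_id] = dict(state)  # store a copy

    def load_session(self, session_id: str) -> dict | None:
        state = self.sessions.get(session_id)
        return dict(state) if state is not None else None


class InMemoryPlayerNotifier:
    def __init__(self) -> None:
        self.sent: list[tuple[str, dict]] = []

    def broadcast(self, session_id: str, message: dict) -> None:
        self.sent.append((session_id, message))


class SessionManager:
    """Core service: receives its dependencies instead of calling get_db()."""

    def __init__(self, repo: GameRepository, notifier: PlayerNotifier) -> None:
        self._repo = repo
        self._notifier = notifier

    def start(self, session_id: str, players: list[str]) -> None:
        self._repo.save_session(session_id, {"players": players, "turn": 0})
        self._notifier.broadcast(session_id, {"type": "started", "turn": 0})

    def end_turn(self, session_id: str) -> int:
        state = self._repo.load_session(session_id)
        if state is None:
            raise KeyError(session_id)
        state["turn"] += 1
        self._repo.save_session(session_id, state)
        self._notifier.broadcast(session_id, {"type": "turn", "turn": state["turn"]})
        return state["turn"]
```

Because both collaborators arrive through `__init__`, a test wires in the InMemory adapters and runs the whole lifecycle in memory; production wiring passes the SQLite and WebSocket adapters instead.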

Step 2 added ContactRepository and TalesRepository for the new website sections. These followed the pattern from day one: port, adapter, wiring, done. Then ContentRepository to get CSV-backed game data (heroes, spells, monsters, maps) behind a port instead of importing seed.py directly.
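A sketch of a CSV-backed adapter for the ContentRepository port, assuming each content table is a CSV file with an `id` column (the real column layout isn't shown in this post). The standard-library `csv.DictReader` does the parsing; values come back as strings, so any type conversion would live in the adapter or the domain.

```python
from __future__ import annotations

import csv
import io
from pathlib import Path
from typing import Protocol


class ContentRepository(Protocol):
    """Port: read-only access to game content tables (hypothetical methods)."""

    def all(self, table: str) -> list[dict[str, str]]: ...
    def by_id(self, table: str, item_id: str) -> dict[str, str] | None: ...


class CsvContentRepository:
    """Adapter: one CSV text per table, each with an assumed 'id' column."""

    def __init__(self, tables: dict[str, str]) -> None:
        # Parse every table once; DictReader uses the header row as keys.
        self._tables = {
            name: list(csv.DictReader(io.StringIO(text)))
            for name, text in tables.items()
        }

    @classmethod
    def from_directory(cls, directory: Path) -> CsvContentRepository:
        # e.g. content/heroes.csv becomes table "heroes"
        return cls({p.stem: p.read_text(encoding="utf-8") for p in directory.glob("*.csv")})

    def all(self, table: str) -> list[dict[str, str]]:
        return list(self._tables.get(table, []))

    def by_id(self, table: str, item_id: str) -> dict[str, str] | None:
        rows = self._tables.get(table, [])
        return next((row for row in rows if row["id"] == item_id), None)
```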

Step 3 brought the router evolution. The routers had been importing adapter instances directly (from app.container import contact_repo). That still violates hex. Refactored to FastAPI Depends() injection: routers declare a port-typed parameter, the composition root provides the implementation. Swapping SQLite for Postgres changes one line in container.py and nothing else.
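A sketch of the composition-root half of that refactor, assuming a hypothetical one-method `TalesRepository`. The provider function in `container.py` is what a router would hand to FastAPI's `Depends()`; here the handler is a plain function so the example runs without FastAPI installed, and the FastAPI signature appears only as a comment.

```python
from __future__ import annotations

from typing import Protocol


class TalesRepository(Protocol):
    def list_titles(self) -> list[str]: ...


class InMemoryTalesRepository:
    def __init__(self, titles: list[str] | None = None) -> None:
        self._titles = list(titles or [])

    def list_titles(self) -> list[str]:
        return list(self._titles)


# --- container.py: the only module that knows which adapter is in use ---
_tales_repo: TalesRepository = InMemoryTalesRepository(["Example tale"])


def get_tales_repo() -> TalesRepository:
    """Provider function; swapping adapters means editing the line above."""
    return _tales_repo


# --- routers/tales.py: depends on the port type, never on an adapter ---
# In FastAPI this would read:
#   @router.get("/tales")
#   def list_tales(repo: TalesRepository = Depends(get_tales_repo)): ...
def list_tales(repo: TalesRepository) -> dict:
    return {"tales": repo.list_titles()}
```

Tests can call `list_tales(InMemoryTalesRepository([...]))` directly; in a running FastAPI app, `app.dependency_overrides[get_tales_repo]` serves the same purpose.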

The numbers: 8 ports, 11+ adapters. All wired through a single composition root. Tests went from 40 to over 300, all running in under 0.1 seconds. No mocks, mind you, real InMemory adapters that exercise actual logic.

Where the Vibes Went Off (and Why)

You probably know the term “vibe coding.” Andrej Karpathy coined it in February 2025, and by March 2025 a quarter of the startups in Y Combinator’s Winter 2025 cohort reported codebases that were 95% AI-generated.

My first two projects proved (to me) that vibes work. My third project shows when the vibes go off, as the kids would say: when complexity exceeds what one context window can hold. When one file becomes ten, ten become fifty, and the AI can’t remember its own architecture. Or more likely, the lack of one.

OX Security analyzed 300+ repositories in October 2025 and named their report “The Army of Juniors.” They found a “Return of Monoliths” in 40–50% of AI-generated codebases and “Fake Test Coverage” at similar rates. CodeRabbit’s December 2025 report analyzed 470 real-world pull requests and found that AI-generated code produces roughly 8x more excessive I/O operations than human-written code. Veracode tested 100+ LLMs and found that AI-generated code introduced security vulnerabilities 45% of the time, a rate consistent across model sizes.

It’s not that AI writes bad code. It just writes structurally incoherent code at superhuman speed. Or rather, it makes the same mistakes humans do, but very fast.

Session 13: The First Swarm

With the hexagon in place, I got curious. What happens if I run multiple AI agents in parallel?

Session 13 was a conditions sprint: bleeding, poisoned, stunned, that kind of thing. Four agents working at the same time. And this was the session that taught me that agents need explicit scoping. Not just “you work on backend,” they need file-level ownership. Without it, two agents will cheerfully edit the same file and produce a merge conflict that neither understands.

That led to AGENTS.md, the agent team rules. Five specialized builders, each with exclusive files:

Engine — Backend combat rules — engine/state.py, engine/rules.py, engine/ai.py

UI/UX — Frontend components — components/ActionPanel.tsx, styles/tbt-theme.css

Mobile — Responsive + touch — components/BattleGrid.tsx, pages/Battle.tsx

Infra — Docker, deploy — docker-compose.yml, requirements.txt, app/main.py

First Responder — QA (read-only) — Reads everything, writes only to FIRST_RESPONDER_REPORT.md

Plus a Team Lead who coordinates without writing code. (Yes, I basically reinvented a project team. More on that in a moment.)

The file-lock rule is simple: a teammate must not modify files owned by another teammate. Cross-boundary changes require the owning teammate to make the change. And here’s the thing: the hexagonal boundaries made this natural. The Engine agent sees Python, game rules, and port interfaces. The UI/UX agent sees React components. Neither holds the other’s domain in memory.
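In AGENTS.md the rule is prose, enforced by convention. As a hypothetical sketch, a pre-merge check could make it mechanical, assuming each agent's branch diff is available as a list of paths (for example from `git diff --name-only`). The ownership map is transcribed from the list above; treating unowned files as open to every builder is an assumption.

```python
from __future__ import annotations

from fnmatch import fnmatch

# Ownership map transcribed from the AGENTS.md list (agent keys are hypothetical).
OWNERS: dict[str, list[str]] = {
    "engine": ["engine/state.py", "engine/rules.py", "engine/ai.py"],
    "ui_ux": ["components/ActionPanel.tsx", "styles/tbt-theme.css"],
    "mobile": ["components/BattleGrid.tsx", "pages/Battle.tsx"],
    "infra": ["docker-compose.yml", "requirements.txt", "app/main.py"],
    "first_responder": ["FIRST_RESPONDER_REPORT.md"],
}


def owner_of(path: str) -> str | None:
    """Return the agent that owns a path, or None if nobody does."""
    for agent, patterns in OWNERS.items():
        if any(fnmatch(path, pattern) for pattern in patterns):
            return agent
    return None


def lock_violations(agent: str, changed_files: list[str]) -> list[str]:
    """List every changed file this agent was not allowed to touch."""
    violations = []
    for path in changed_files:
        owner = owner_of(path)
        if agent == "first_responder" and owner != agent:
            # QA is read-only except for its own report.
            violations.append(f"{path}: First Responder is read-only")
        elif owner is not None and owner != agent:
            violations.append(f"{path} is owned by {owner}")
    return violations
```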

Anthropic’s context engineering guidance puts it well: agents should “assemble understanding layer by layer, maintaining only what’s necessary in working memory.” Chroma Research’s “Context Rot” study measures the effect: LLM accuracy degrades as the context window fills, even well within the model’s technical limits. You don’t want to fit more in. You want to need less.

The hexagonal architecture gives each agent its own wax frame to build on. The architecture is the instruction.

Session 18: Muscle Memory

This is where the pattern clicked for the AI, not just for me.

EmailSender, the 7th port, shipped in one session. Port definition, three adapters (SendGrid for production, LogOnly for local dev, InMemory for tests), router changes, ten new tests. Done. The hex arch pattern was now muscle memory for Claude. The context window wasn’t learning from zero each time; it was executing a known playbook.
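A sketch of the EmailSender port with two of its three adapters, assuming a simple `send(email)` method and a hypothetical `Email` value object. The SendGrid adapter is left out on purpose: it would implement the same method through the vendor's SDK, and guessing at that API here would be worse than omitting it.

```python
from __future__ import annotations

import logging
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Email:
    to: str
    subject: str
    body: str


class EmailSender(Protocol):
    def send(self, email: Email) -> None: ...


class LogOnlyEmailSender:
    """Local-dev adapter: logs the email instead of sending it."""

    def __init__(self, logger: logging.Logger | None = None) -> None:
        self._log = logger or logging.getLogger("email")

    def send(self, email: Email) -> None:
        self._log.info("EMAIL to=%s subject=%s", email.to, email.subject)


class InMemoryEmailSender:
    """Test adapter: records outgoing mail so tests can assert on it."""

    def __init__(self) -> None:
        self.outbox: list[Email] = []

    def send(self, email: Email) -> None:
        self.outbox.append(email)


# A SendGrid adapter (omitted) would implement the same send() method.


def notify_contact_received(sender: EmailSender, admin: str, from_email: str) -> None:
    """Core use case: depends only on the port."""
    sender.send(Email(to=admin, subject="New contact message", body=f"From: {from_email}"))
```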

By session 21, the Bard system shipped: TaleGenerator port (the 8th), two adapters (a Claude API adapter with a Roger Zelazny voice prompt, because why not, and a template fallback), plus a pure domain tale_summarizer.py that transforms raw combat data into structured summaries. Fifty-six new tests in one session. Over 200 total.
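A sketch of the split between a pure domain function and a port adapter. The event dictionaries, field names, and summary fields are hypothetical, since the real combat log format isn't shown; the point is that `summarize_combat` does no I/O at all, and the template fallback can stand in whenever the Claude API adapter is unavailable.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class CombatSummary:
    winner: str
    rounds: int
    top_damage_dealer: str
    total_damage: int


def summarize_combat(events: list[dict]) -> CombatSummary:
    """Pure domain function: raw combat events in, structured summary out.

    Assumed event shapes: {"type": "damage", "actor": str, "amount": int},
    {"type": "round"}, and {"type": "victory", "side": str}.
    """
    damage: Counter[str] = Counter()
    rounds = 0
    winner = "nobody"
    for event in events:
        if event["type"] == "damage":
            damage[event["actor"]] += event["amount"]
        elif event["type"] == "round":
            rounds += 1
        elif event["type"] == "victory":
            winner = event["side"]
    top = damage.most_common(1)[0][0] if damage else "nobody"
    return CombatSummary(winner, rounds, top, sum(damage.values()))


class TaleGenerator(Protocol):
    def tell(self, summary: CombatSummary) -> str: ...


class TemplateTaleGenerator:
    """Fallback adapter: no API call, just a string template."""

    def tell(self, summary: CombatSummary) -> str:
        return (
            f"After {summary.rounds} rounds, the {summary.winner} stood victorious. "
            f"{summary.top_damage_dealer} struck hardest, "
            f"and {summary.total_damage} points of damage were dealt in all."
        )
```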

This is the compounding effect. Each port you define makes the next one faster. The AI doesn’t need to figure out where things go: the architecture tells it. Structure attracts methodology.

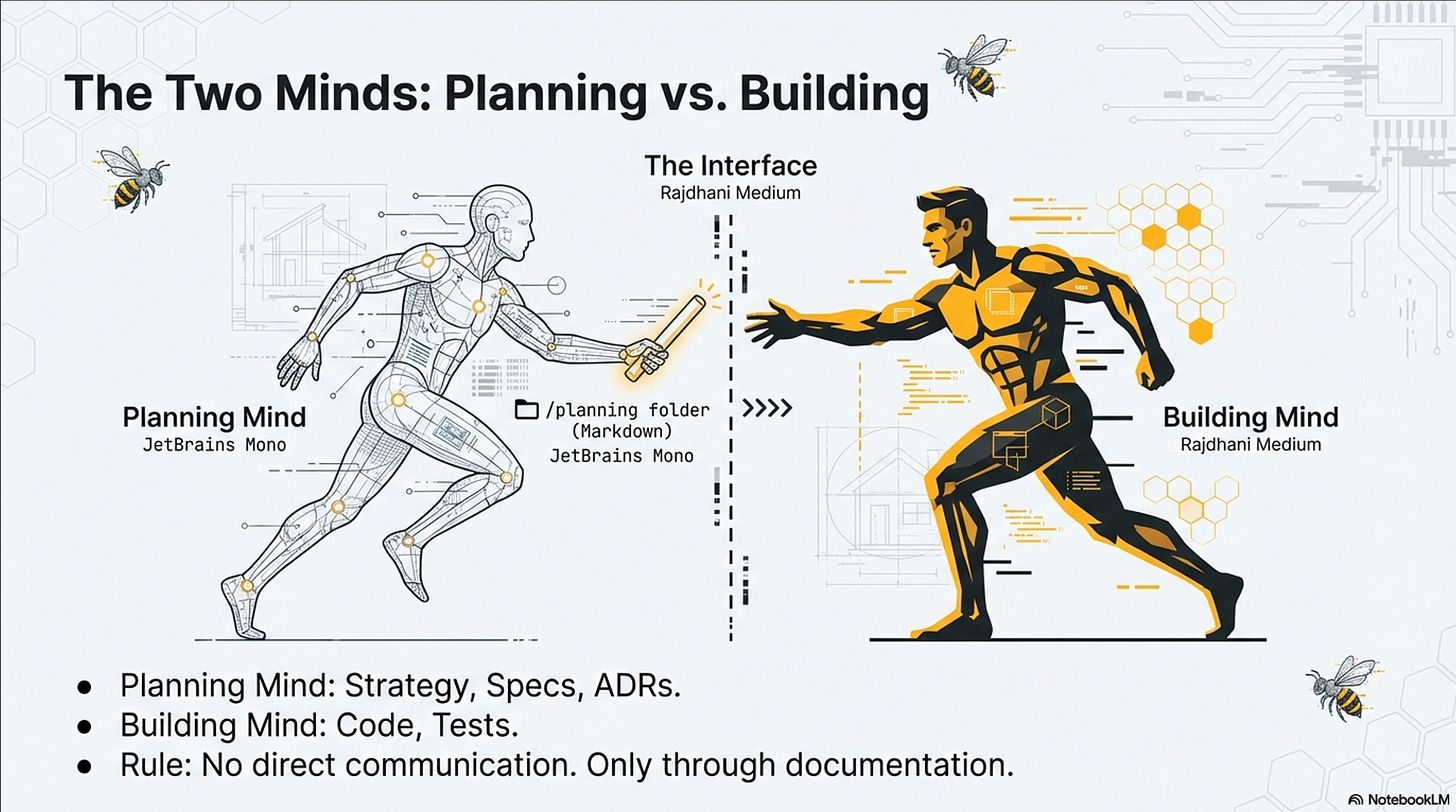

The Dual-Boundary System

Bounding the code isn’t enough. You also need to bound the knowledge that flows between sessions.

The Bridge File. CLAUDE_PROJECT.md gets updated at the end of every development session. Current version, module status, hex architecture overview, file map, decisions, session log. Instead of re-uploading thirty files, I re-upload one. Thirty seconds of context-setting between sessions. By session 17, I was reviewing nine Codex branches in a single session (eight merged, one new) because the agent had clear rules in AGENTS.md and accurate context from the bridge file. It sounds like housekeeping, but it’s actually the most impactful thing I do.
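A hypothetical sketch of how the bridge file could be regenerated at the end of a session, using the section list above (current version, module status, hex architecture overview, file map, decisions, session log). The real CLAUDE_PROJECT.md format isn't shown in this post, so the Markdown layout and the newest-first session log are assumptions.

```python
from __future__ import annotations


def render_bridge_file(
    version: str,
    module_status: dict[str, str],
    architecture: str,
    file_map: dict[str, str],
    decisions: list[str],
    session_log: list[tuple[int, str]],
) -> str:
    """Render CLAUDE_PROJECT.md content in a fixed section order."""
    lines = ["# CLAUDE_PROJECT.md", "", "## Current version", version, ""]
    lines += ["## Module status"] + [f"- {m}: {s}" for m, s in module_status.items()] + [""]
    lines += ["## Hex architecture overview", architecture, ""]
    lines += ["## File map"] + [f"- {p}: {d}" for p, d in file_map.items()] + [""]
    lines += ["## Decisions"] + [f"- {d}" for d in decisions] + [""]
    # Newest session first, so the next session sees the latest context at a glance.
    lines += ["## Session log"] + [
        f"- Session {n}: {note}" for n, note in sorted(session_log, reverse=True)
    ]
    return "\n".join(lines) + "\n"
```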

CSV-Driven Content. All game content (heroes, monsters, spells, maps) lives in CSV files behind the ContentRepository port. Independent benchmarking by Piotr Sikora shows CSV achieves 55–75% token reduction compared to JSON. Every token saved is context window preserved for actual reasoning.
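A rough way to see where CSV's savings come from: JSON repeats every key in every record, while CSV states the keys once in a header row. This sketch compares character counts for the same hypothetical hero rows; characters are only a proxy, and real token counts depend on the model's tokenizer.

```python
from __future__ import annotations

import csv
import io
import json

# Hypothetical hero rows; the real TBT stats are not reproduced here.
HEROES = [
    {"id": "fighter", "name": "Fighter", "hp": 12, "ac": 16},
    {"id": "wizard", "name": "Wizard", "hp": 6, "ac": 12},
    {"id": "cleric", "name": "Cleric", "hp": 10, "ac": 15},
    {"id": "rogue", "name": "Rogue", "hp": 8, "ac": 14},
]


def as_json(rows: list[dict]) -> str:
    # Every record repeats every key.
    return json.dumps(rows)


def as_csv(rows: list[dict]) -> str:
    # Keys appear once, in the header row.
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


json_text, csv_text = as_json(HEROES), as_csv(HEROES)
print(f"JSON: {len(json_text)} chars, CSV: {len(csv_text)} chars")
```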

Test-Driven AI Development. The refactored workflow: (1) The human defines the port, a Python Protocol. (2) The AI implements the InMemory adapter. (3) The AI writes tests against it. (4) The AI implements the production adapter, verified against the same contract. Published research reports that TDD yields a 12.78% improvement on code generation benchmarks, and that test-driven workflows showed a 45.97% average improvement across the LLMs tested. I didn’t adopt TDD for philosophical reasons. I adopted it because the AI produced better code when I gave it a contract instead of a description.
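Here is what steps (1) through (4) can look like in Python, as a hypothetical sketch: the port is a `typing.Protocol`, and one contract function runs the same assertions against the InMemory adapter and a SQLite adapter (standard-library `sqlite3`, in-memory database). The method names and the overwrite and copy rules in the contract are illustrative assumptions.

```python
from __future__ import annotations

import json
import sqlite3
from typing import Callable, Protocol


# (1) Port, written by the human: the contract every adapter must honour.
class GameRepository(Protocol):
    def save_session(self, session_id: str, state: dict) -> None: ...
    def load_session(self, session_id: str) -> dict | None: ...


# (2) InMemory adapter. Storing JSON gives callers a fresh copy on every load,
# matching what a database round-trip does.
class InMemoryGameRepository:
    def __init__(self) -> None:
        self._rows: dict[str, str] = {}

    def save_session(self, session_id: str, state: dict) -> None:
        self._rows[session_id] = json.dumps(state)

    def load_session(self, session_id: str) -> dict | None:
        raw = self._rows.get(session_id)
        return json.loads(raw) if raw is not None else None


# (3) Contract tests: written once against the port, run against every adapter.
def check_game_repository_contract(make_repo: Callable[[], GameRepository]) -> None:
    repo = make_repo()
    assert repo.load_session("missing") is None
    repo.save_session("s1", {"turn": 0})
    repo.save_session("s1", {"turn": 1})  # saving again overwrites
    assert repo.load_session("s1") == {"turn": 1}
    loaded = repo.load_session("s1")
    loaded["turn"] = 99  # mutating a loaded copy must not leak back into storage
    assert repo.load_session("s1") == {"turn": 1}


# (4) Production adapter, verified against the same contract.
class SqliteGameRepository:
    def __init__(self, path: str = ":memory:") -> None:
        self._conn = sqlite3.connect(path)
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS sessions (id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def save_session(self, session_id: str, state: dict) -> None:
        # INSERT OR REPLACE overwrites an existing row with the same primary key.
        self._conn.execute(
            "INSERT OR REPLACE INTO sessions (id, state) VALUES (?, ?)",
            (session_id, json.dumps(state)),
        )
        self._conn.commit()

    def load_session(self, session_id: str) -> dict | None:
        row = self._conn.execute(
            "SELECT state FROM sessions WHERE id = ?", (session_id,)
        ).fetchone()
        return json.loads(row[0]) if row is not None else None


for factory in (InMemoryGameRepository, SqliteGameRepository):
    check_game_repository_contract(factory)
```

The design choice is that the contract, not the adapter, is the thing the AI is asked to satisfy: a new adapter is done when it passes the same function the InMemory one already passes.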

The First Responder. This one’s my favorite. An Opus-tier AI with read-only access. It doesn’t write code. It plays the game: walks the new-player flow, plays three PvE encounters as different classes, opens two tabs for a PvP duel, and writes a brutally honest report. Does this work? Is this fun? Where did I get confused? No diplomacy. Just QA from an AI that has no investment in the code being good.

“I Keep Reinventing the SDLC”

By session 26, I had a realization that made me laugh out loud.

I’d wanted to test the game with GPT’s agent mode, point it at the live URL and let it play as a browser-controlling agent. Inner Ring of Trust validation: does the combat engine produce interesting decisions? Before launching it, the obvious question: “Which of my files should I give it so it understands how to play?”

The answer was: none of them. Every document in the project assumed the reader was building the game. Not one assumed the reader was playing it. Fifteen process and documentation files. Zero user documentation.

And that’s when I said it out loud: “I keep reinventing the SDLC.”

Vibe coding’s core proposition is that you skip the ceremony. No PRDs, no sprint planning, no formal docs. But what actually happened across 25 sessions was that every SDLC artifact got created anyway, not because of process discipline, but because the need surfaced organically:

Requirements? The Wardley map appeared in session 10 when I realized features had no strategic priority.

Architecture doc? Hexagonal architecture emerged in session 3 when the monolith became untestable.

Test plan? The First Responder Report was created in session 13 when the agent swarm exposed eight bugs.

User documentation? Discovered missing in session 26 when a non-developer consumer couldn’t use the product.

Vibe coding doesn’t eliminate process. It defers it until the pain of not having it exceeds the cost of creating it. The documentation was always needed; the question was just when the need became undeniable. Every PM reading this is nodding right now.

Session 24: Compound Interest

A light/dark theme toggle. Pure frontend, no new ports. I specified exact hex values for every CSS token, and Claude executed a ten-file change in one session with zero rework.

Why did that work? Because in session 4, I’d established a CSS variable architecture, var(--tbt-*) design tokens. Twenty sessions later, adding a theme toggle was mostly defining a second set of values under [data-theme="light"]. Twenty sessions of compound interest on one early structural decision.
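The payoff of design tokens is that components only reference `var(--tbt-*)`, so a theme is just a second table of values. The CSS itself isn't reproduced in this post; as a hypothetical sketch, a small Python helper can emit both token blocks and refuse to build a light theme that is missing a token. The token names, the hex values, and the choice of `:root` for the dark default are all assumptions.

```python
from __future__ import annotations

# Hypothetical tokens and values; not the real TBT palette.
DARK = {"--tbt-bg": "#1b1a17", "--tbt-text": "#f2e8d5", "--tbt-accent": "#c8a24a"}
LIGHT = {"--tbt-bg": "#f7f1e3", "--tbt-text": "#2b2620", "--tbt-accent": "#8a5a12"}


def css_block(selector: str, tokens: dict[str, str]) -> str:
    """Render one selector block of CSS custom properties."""
    body = "\n".join(f"  {name}: {value};" for name, value in tokens.items())
    return f"{selector} {{\n{body}\n}}"


def theme_css(dark: dict[str, str], light: dict[str, str]) -> str:
    """Dark values on :root, light overrides under [data-theme="light"]."""
    missing = dark.keys() - light.keys()
    if missing:
        raise ValueError(f"light theme missing tokens: {sorted(missing)}")
    return css_block(":root", dark) + "\n\n" + css_block('[data-theme="light"]', light)


print(theme_css(DARK, LIGHT))
```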

My iteration log note: “Architectural decisions compound. The CSS variable system was a v0.3.0 choice (session 4) that made a v1.5.1 feature (session 24) trivial.”

That’s the case study in one sentence. Structure compounds. Every session inherits what the previous sessions built, not just the code, but the architecture of the code. And when your implementer is an AI that starts each session with a fresh context window, that inherited structure is the only thing standing between you and entropy.

What I Found When I Went Looking

I built all of this on instinct first. Then I went looking for whether anyone else had arrived at the same place. Turns out, yes, from multiple directions.

The Skeleton Architecture on InfoQ (February 2026) uses Template Method instead of Ports and Adapters but arrives at the same golden rule: minimize the scope the model must hold in working memory. The “Maria” platform (arXiv, 2026), a production healthcare AI system, uses Clean Architecture with Event-Driven Architecture. Zero percent API failure rate, and swapping an LLM provider is an infrastructure change, not a rewrite of core logic.

Harvard’s “Modular Imperative” hit me hardest. LLMs consistently violate modular principles by default, but they excel when architectural constraints are explicit. The models aren’t incapable. They just won’t do it unless the structure forces them to.

And spec-driven development: GitHub’s Spec Kit and writing on Red Hat Developer both make the case that formal specifications significantly reduce AI hallucinations. Hexagonal port interfaces are executable specifications. I didn’t know the term when I started. I just knew that Python Protocols produced dramatically better results than natural language descriptions.

Three Frameworks, One Game

TBT became a convergence point for three frameworks I’d been carrying separately.

Hexagonal Architecture from Alistair Cockburn gave me the code boundaries: ports, adapters, the separation of what the app does from how it talks to the world. I’ve described it enough by now, I think.

Wardley Mapping from Simon Wardley gave me the strategic vocabulary. I follow his work closely, and he is very vocal about AI usage, the worth-reading kind of vocal; check out his LinkedIn. In a world where AI ships features in hours, roadmaps stop making sense. I needed a way to see where each component sits in its evolution and what to invest in next. I’ve been developing an alternative to roadmaps that I call Living Rings (concentric circles built on Wardley Maps), but that’s an article of its own. The Wardley Map is now built into the project: AGENTS.md tells AI agents to read the map before prioritizing work, and never to modify it directly.

Clean Language and Systemic Modeling from Caitlin Walker gave me a way to think about the prompts themselves. Caitlin founded Systemic Modeling. I hope to work with her on something I’m calling Clean Prompting, applying Clean Language principles to human-AI interaction. If the architecture bounds the code, clean prompting bounds the conversation. Still early. I’m sharing it with Caitlin, as she’s already pursuing related ideas.

Three thought leaders as inspiration. Three frameworks to support growing project needs. One D&D tactical combat game built by AI agents on a €4.51/month VPS, assembled in evening sessions by a guy who was a circus acrobat before he became a program manager.

Where I Am Now

Tattered Banner Tales is at v1.10.0. Thirty-four development sessions logged. Battle Arena, Map Builder, and Bard System all live. Over 300 tests passing. Eight ports, eighteen adapters. PixiJS WebGL renderer just swapped in under the hood. Deployed and running.

// Between writing this article and gathering the courage to post it, Tattered Banner Tales reached v2.2.0… I hope to be much more prompt in the future (hehe, pun intended).

I’m not pretending this is airtight. The automated enforcement is weak; boundaries are convention, not compile-time checks. I don’t have controlled metrics comparing bounded versus unbounded on the same project. Organizational scale is an open question. And I still catch myself deferring things I probably shouldn’t.

But the convergence across independent research is hard to ignore. The developer role is shifting, from writing code to designing constraints. Context engineering is the new performance optimization. And the patterns already exist. Hexagonal Architecture is twenty years old. DDD is older. The insight isn’t that we need new patterns. It’s that old patterns become more important when the implementer has no inherent sense of architectural coherence.

Over to You

This is my first Substack post. I’m not here to sell a framework. I’m here to test a thesis in the open, so feel free to be candid!

The thesis: architectural boundaries like Hexagonal Architecture provide the optimal structural framework for AI-assisted development. Old pattern, new context. The bees already knew.

If you’re building with AI agents and the walls are closing in, I want to hear what you’re seeing. If you’ve found a different way to keep the structure standing, tell me what it is. If you think I’m wrong, and especially if you think I’m wrong, tell me where. I’ll appreciate it.

I vibed first. My training kicked in. The hexagon held.

Tattered Banner Tales is an ongoing case study, a D&D 5e tactical combat game built with hexagonal architecture and AI agent teams.

Djordje Babic is CEO of Hivemind d.o.o. and Staff Technical Program Manager at Rivian. He’s been friends with Alistair Cockburn (creator of Hexagonal Architecture and co-author of the Agile Manifesto) for seven years and is a Heart of Agile delivery partner. He met Caitlin Walker as a facilitator and started bringing Clean Language into his everyday work (with humans, at the time). He hosted her workshop in Belgrade. Djordje is an admirer of Wardley Maps; although he has never met Simon Wardley, he follows his work with great interest.

Before all of this, Djordje was a circus acrobat. He is of beekeeping age. This is his first Substack post.

References

Vibe Coding — The Problem Space

Karpathy, A. “Vibe Coding.” February 2025. Wikipedia

TechCrunch, “A quarter of startups in YC’s current cohort have codebases that are almost entirely AI-generated.” March 2025. Link

OX Security, “The Army of Juniors: The AI Code Security Crisis.” October 2025. Link

CodeRabbit, “State of AI vs Human Code Generation Report.” December 2025. Link

Veracode, “GenAI Code Security Report.” October 2025. Link

Context Windows & Token Optimization

Chroma Research, “Context Rot.” 2025. Link

Anthropic, “Effective Context Engineering for AI Agents.” September 2025. Link

Sikora, P., “TOON vs TRON vs JSON vs YAML vs CSV for LLM Apps.” December 2025. Link

Architectural Patterns for AI

InfoQ, “Working with Code Assistants: the Skeleton Architecture.” February 2026. Link

Lopes et al., “Maria: A Case Study in Architecture, MLOps, and Governance.” arXiv, 2026. Link

Harvard, “The Modular Imperative: Rethinking LLMs for Maintainable Software.” 2025. Link

Singh, V., “Ports & Adapters for AI.” LinkedIn, November 2025. Link

Cockburn, A., “Hexagonal Architecture.” 2005. Link

Test-Driven AI Development

Mathews et al., “Test-Driven Development for Code Generation.” arXiv, February 2024. Link

Fakhoury et al., “LLM-Based Test-Driven Interactive Code Generation.” arXiv, 2024. Link

SAS Communities, “Vibe Coding with Generative AI and Test-Driven Development.” June 2025. Link

Multi-Agent Development

Willison, S., “Embracing the parallel coding agent lifestyle.” October 2025. Link

VS Code, “Your Home for Multi-Agent Development.” February 2026. Link
